
9/7/2023

Torch permute

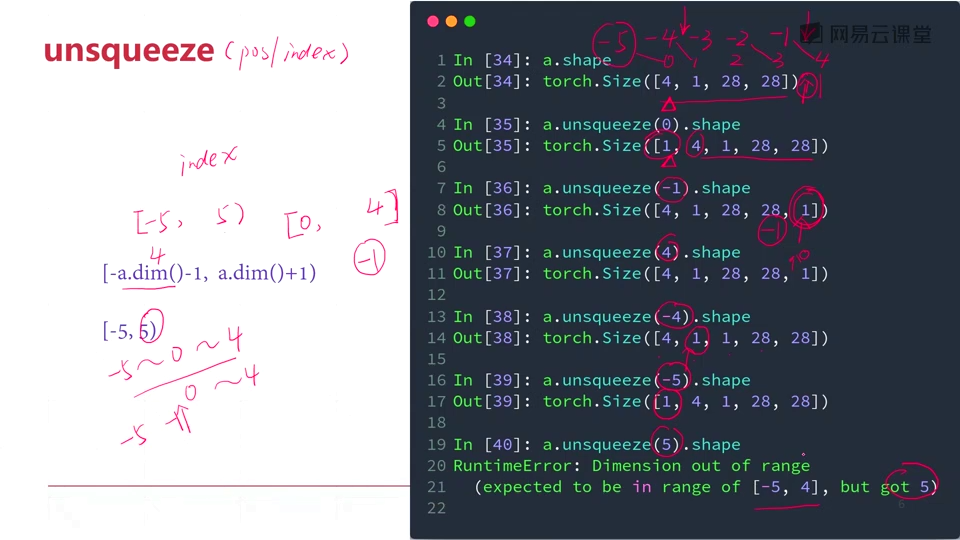

From the printed values, I can infer that the function did not shuffle the data. The `Permute` class (`dims: List[int]`) is a module that returns a view of the input tensor with its dimensions permuted.
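To make the "view, not shuffle" point concrete, here is a minimal sketch, assuming a recent PyTorch install. It uses the tensor method `permute` rather than the `Permute` module, and the tensor values are made up for illustration.

```python
import torch

# A small 2 x 3 tensor with recognizable values (illustrative only).
x = torch.arange(6).reshape(2, 3)

# Permuting swaps the two dimensions; no element is copied or shuffled.
y = x.permute(1, 0)

# Both tensors start at the same memory address, so y is a view of x.
same_storage = x.data_ptr() == y.data_ptr()

# Writing through x is visible through y, at the transposed position.
x[0, 1] = 100
seen_through_view = y[1, 0].item()

print(y.shape, same_storage, seen_through_view, y.is_contiguous())
```

Because only the sizes and strides change, the permuted tensor is usually non-contiguous; calling `.contiguous()` on it makes a real copy when one is needed.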

I am new to torch, so I have some trouble figuring out how permutation works. The following is supposed to shuffle the data: inside `if argshuffle then`, the code calls `local perm = torch.randperm(sids:size(1)):long()`.

Transposing and permuting tensors are common operations. `permute()` is mainly used to exchange dimensions. Unlike `view()`, which keeps the elements in their original memory order, `permute()` changes the order in which the elements are traversed. A typical case is rearranging a tensor that stores an image from (height, width, channel) to (channel, height, width) before feeding it to a neural network. This function returns a view of the original tensor, which means it does not copy the underlying data.

Let's take a look at an example: build a tensor from the values 1 through 12 with `inputs = torch.tensor(inputs)` and print it with `print(inputs)`.

A dataset class can apply the same rearrangement when it loads images. Its `__init__(self, train_images, train_labels, train_boxes)` sets `self.images = torch.permute(torch.from_numpy(train_images), (0, 3, 1, 2)).float()`, `self.labels = torch.from_numpy(train_labels).type(torch.LongTensor)`, and `self.boxes = torch.from_numpy(train_boxes).float()`, and its `__len__` returns `len(self.labels)`.

I assumed that there should be a GPU kernel function for permutation in PyTorch/aten/src/ATen/native/cuda/, but I didn't find it in either TensorTransformation. That is consistent with `permute()` returning a view: only the sizes and strides change, so no data has to be moved.

A related function is `torch.roll(input, shifts, dims=None) → Tensor`, which rolls the tensor `input` along the given dimension(s). Elements that are shifted beyond the last position are re-introduced at the first position. If `dims` is None, the tensor is flattened before rolling and then restored to the original shape.

For one-hot encoding, the parameter is `tensor` (LongTensor): class values of any shape.
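The dataset class mentioned above is cut off in the original, so the following is only a sketch of how it might be completed, assuming PyTorch and NumPy are installed. The class name `BoxDataset`, the body of `__getitem__`, and the dummy arrays are assumptions added for illustration.

```python
import numpy as np
import torch
from torch.utils.data import Dataset


class BoxDataset(Dataset):  # hypothetical name; the original class is unnamed
    def __init__(self, train_images, train_labels, train_boxes):
        # Images arrive as (batch, height, width, channel); move the channel
        # axis so they become (batch, channel, height, width).
        self.images = torch.permute(
            torch.from_numpy(train_images), (0, 3, 1, 2)
        ).float()
        self.labels = torch.from_numpy(train_labels).type(torch.LongTensor)
        self.boxes = torch.from_numpy(train_boxes).float()

    def __len__(self):
        return len(self.labels)

    def __getitem__(self, idx):
        # Assumption: the original is truncated here; returning one sample
        # of each field is a common convention, not the author's code.
        return self.images[idx], self.labels[idx], self.boxes[idx]


# Dummy data: 5 images of height 32, width 24, with 3 channels.
images = np.zeros((5, 32, 24, 3), dtype=np.uint8)
labels = np.arange(5)
boxes = np.zeros((5, 4), dtype=np.float32)

dataset = BoxDataset(images, labels, boxes)
image, label, box = dataset[0]
print(len(dataset), image.shape, label.dtype, box.shape)
```

Doing the permute once in `__init__` keeps `__getitem__` cheap; the alternative is to permute each sample as it is fetched.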
The `torch.permute()` function rearranges the dimensions of a tensor according to a given order; the method form is `permute(dims)`. For example, if you have a tensor of shape (2, 3, 4), `permute(2, 0, 1)` changes its shape to (4, 2, 3) by moving the last dimension to the front. Swapping only the first and the last dimension would give (4, 3, 2) instead. This is quite useful for rearranging the dimensions of a tensor before feeding it to a network. For a permutation `p`, `x.permute(p).shape` shows the resulting shape.

I am currently working in torch to implement a random shuffle (on the rows, the first dimension in this case) on some input data.

The one-hot function takes a LongTensor with index values of shape `(*)` and returns a tensor of shape `(*, num_classes)` that has zeros everywhere except where the index of the last dimension matches the corresponding value of the input tensor, in which case it is 1.

Layout matters because convolution expects its input as a tensor of shape (minibatch, in_channels, iH, iW). For the padding argument, `padding='valid'` is the same as no padding, while `padding='same'` pads the input so the output has the same shape as the input. However, this mode doesn't support any stride values other than 1. This operator supports complex data types.

The Permute transformation in does not perform the expected permutation.
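The difference between permuting dimensions and shuffling rows can be sketched as follows, assuming a recent PyTorch install. The tensor contents and the seed are arbitrary, and the row shuffle uses `torch.randperm` with index selection as one common approach, not necessarily the one the question's author ended up with.

```python
import torch

# Permuting dimensions: the shape changes, the data does not.
x = torch.arange(24).reshape(2, 3, 4)
moved = x.permute(2, 0, 1)    # last dimension moved to the front: (4, 2, 3)
swapped = x.permute(2, 1, 0)  # first and last swapped: (4, 3, 2)

# Element (i, j, k) of x ends up at (k, i, j) in `moved`.
same_element = moved[3, 1, 2].item() == x[1, 2, 3].item()

# Shuffling rows: draw a random ordering of the row indices and index with it.
torch.manual_seed(0)  # arbitrary seed, only to make reruns repeatable
data = torch.arange(12).reshape(4, 3)
perm = torch.randperm(data.size(0))
shuffled = data[perm]

print(moved.shape, swapped.shape, same_element)
print(perm.tolist())
print(shuffled)
```

`permute` alone never reorders the rows of `data`; it is the indexing with `perm` that does the shuffling.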
